You may have heard that Twitter now considers “cis” and “cisgender” to be slurs.

I asked ChatGPT what makes a word a slur. Here’s what it said.

> A slur is a derogatory or offensive term used to demean or insult a particular individual or group of people based on their race, ethnicity, gender, sexual orientation, religion, or other characteristics. Slurs are typically intended to belittle, dehumanize, or marginalize the targeted group.
>
> Several factors contribute to a word being considered a slur:
>
> 1. Derogatory Intent: Slurs are intentionally used to demean or disparage individuals or communities. The primary purpose of a slur is to insult or offend.
>
> 2. Historical Context: Many slurs have a long history of being used to oppress or discriminate against marginalized communities. The historical use and association of a word with discrimination or violence contribute to its classification as a slur.
>
> 3. Impact and Offensiveness: Slurs often evoke strong negative emotions and perpetuate harmful stereotypes. They can be deeply hurtful and dehumanizing to those targeted by them, causing psychological and emotional harm.
>
> 4. Societal Consensus: The classification of a word as a slur can also depend on societal consensus. The general perception of a term within a community or society, especially among the affected group, plays a significant role in determining whether it is considered a slur.
>
> It is important to note that slurs can vary across cultures, contexts, and time periods. What may be considered a slur in one culture or era might not have the same connotation in another. Additionally, the impact and offensiveness of slurs can evolve over time as societies progress and language norms change.

That seems mostly reasonable, although it misses a key component: what do the people the word is used to describe think of it?

Is “cis” used with derogatory intent? I’m not sure, but I think it’s at least slightly derogatory.

It has no historical context because all this “gender” foolishness started 10 minutes ago.

Is it offensive? I don’t know. Slightly, maybe.

Is there a consensus? How could there be? If you disagree with the trans narrative, you get social media murdered.

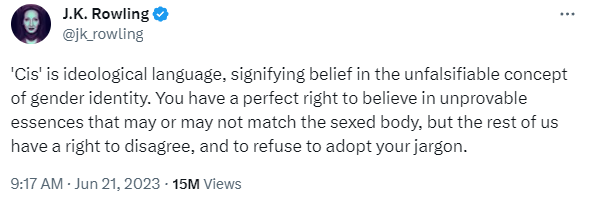

Here’s what J.K. Rowling has to say about the words, although she doesn’t address whether they are “slurs.”